New AI accelerators with novel instruction set architectures (ISAs) often require developers to hand-write low-level kernels, a slow and error-prone process that does not scale across diverse hardware targets. This slows the path to market for emerging hardware platforms. While prior LLM-based code generation has shown promise in mature GPU ecosystems, it remains unclear whether agentic LLM systems can quickly produce valid and efficient kernels for emerging hardware with new ISAs.

We present KernelCraft, the first benchmark to evaluate an LLM agent's ability to generate and optimize low-level kernels for customized accelerators via a function-calling, feedback-driven workflow. Within KernelCraft, the agent refines kernels under ISA and hardware constraints using automated feedback derived from compilation checks, simulation, and correctness validation against ground truth. In our experiments, we assess agent performance across three emerging accelerator platforms on more than 20 ML tasks, each with 5 diverse task configurations, and include a dedicated evaluation of task configuration complexity. Across four leading reasoning models, top agents produce functionally valid kernels for previously unseen ISAs within a few refinement steps, with optimized kernels that match or outperform template-based compiler baselines. These results demonstrate the potential for reducing the cost of kernel development for accelerator designers and kernel developers.

Diagnosis-and-repair loop. Starting from workload/ISA/hardware specifications, the agent writes an assembly kernel that is automatically verified using syntax checks and reference-based functional checks. When mismatches are detected, KernelCraft performs memory-level diff diagnostics and feeds signals back to the agent for iterative patching.
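The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not KernelCraft's actual implementation: `refine`, `memory_diff`, `Feedback`, and the callback parameters (`generate`, `check_syntax`, `simulate`) are hypothetical names introduced here, and memory is modeled as a flat list of floats of the same length as the reference.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Feedback:
    """Signal handed back to the agent (hypothetical structure)."""
    stage: str   # "syntax" or "functional"
    detail: str  # human-readable diagnostic


def memory_diff(expected: List[float], actual: List[float],
                tol: float = 1e-3, limit: int = 8) -> List[Tuple[int, float, float]]:
    """Return up to `limit` (address, expected, actual) mismatches.

    Assumes both memories have the same length.
    """
    diffs = [(addr, e, a)
             for addr, (e, a) in enumerate(zip(expected, actual))
             if abs(e - a) > tol]
    return diffs[:limit]


def refine(generate: Callable[[Optional[Feedback]], str],
           check_syntax: Callable[[str], Optional[str]],
           simulate: Callable[[str], List[float]],
           reference: List[float],
           max_iters: int) -> Tuple[Optional[str], int]:
    """Iterate generate -> verify -> diagnose until the kernel passes.

    Returns (kernel, iterations_used); kernel is None if the budget runs out.
    """
    feedback: Optional[Feedback] = None
    for step in range(1, max_iters + 1):
        kernel = generate(feedback)        # agent writes or patches the kernel
        error = check_syntax(kernel)       # stand-in for the compilation check
        if error is not None:
            feedback = Feedback("syntax", error)
            continue
        diffs = memory_diff(reference, simulate(kernel))
        if not diffs:
            return kernel, step            # functionally valid kernel
        feedback = Feedback(
            "functional",
            f"mismatches (addr, expected, actual): {diffs}")
    return None, max_iters
```

In this sketch the iteration budget is just `max_iters`, so a Level 1 task would call `refine(..., max_iters=15)`. Capping the number of reported mismatches is a design choice made here to keep the feedback short; the source does not say how KernelCraft formats its diff diagnostics.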

KernelCraft evaluates across three emerging accelerator platforms with diverse ISAs:

| Platform | ISA Type | Toolchain | Execution |
|---|---|---|---|
| PLENA | Custom NPU ISA | PLENA Compiler | Simulator |
| AMD NPU | Custom NPU ISA | Peano Compiler | Hardware |
| Coral NPU | RISC-V + RVV | RISC-V Compiler | Verilator RTL |

We evaluate four frontier reasoning models across three accelerator platforms, totalling over 1,100 experiments. Each task is evaluated on 5 configurations; each cell shows successful/total configurations solved within the level's iteration budget (15, 20, and 25 iterations for Levels 1, 2, and 3, respectively).

| ID | Task | PLENA: GPT-5.2 | PLENA: Gemini-3 | PLENA: Sonnet 4 | PLENA: DS-R1 | AMD NPU: GPT-5.2 | AMD NPU: Gemini-3 | AMD NPU: Sonnet 4 | AMD NPU: DS-R1 | Coral NPU: GPT-5.2 | Coral NPU: Gemini-3 | Coral NPU: Sonnet 4 | Coral NPU: DS-R1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | Level 1: Primitive Operations (Max 15 iterations) | | | | | | | | | | | | |
| 1 | SiLU | 5/5 | 5/5 | 2/5 | 0/5 | 1/5 | 1/5 | 0/5 | 0/5 | 3/5 | 3/5 | 0/5 | 0/5 |
| 2 | ReLU | 2/5 | 0/5 | 1/5 | 0/5 | 2/5 | 1/5 | 0/5 | 0/5 | 5/5 | 4/5 | 1/5 | 0/5 |
| 3 | GELU | 4/5 | 4/5 | 1/5 | 0/5 | 1/5 | 2/5 | 0/5 | 0/5 | 5/5 | 5/5 | 0/5 | 0/5 |
| 4 | Softmax | 5/5 | 3/5 | 4/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 4/5 | 2/5 | 0/5 | 0/5 |
| 5 | LayerNorm | 3/5 | 5/5 | 2/5 | 0/5 | 2/5 | 1/5 | 0/5 | 0/5 | 1/5 | 0/5 | 0/5 | 0/5 |
| 6 | RMSNorm | 3/5 | 5/5 | 1/5 | 1/5 | 1/5 | 0/5 | 0/5 | 0/5 | 1/5 | 1/5 | 0/5 | 0/5 |
| 7 | GEMV | 5/5 | 2/5 | 1/5 | 0/5 | 2/5 | 1/5 | 0/5 | 0/5 | 4/5 | 5/5 | 0/5 | 0/5 |
| 8 | GEMM | 4/5 | 2/5 | 0/5 | 0/5 | 4/5 | 3/5 | 2/5 | 1/5 | 2/5 | 4/5 | 1/5 | 1/5 |
| 9 | BatchMatMul | 2/5 | 2/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 |
| 10 | Linear | 4/5 | 2/5 | 0/5 | 0/5 | 3/5 | 2/5 | 1/5 | 0/5 | 2/5 | 0/5 | 0/5 | 0/5 |
| 11 | Conv2D | -- | -- | -- | -- | 0/5 | 0/5 | 0/5 | 0/5 | 2/5 | 1/5 | 0/5 | 0/5 |
| 12 | DepthwiseConv | -- | -- | -- | -- | 0/5 | 0/5 | 0/5 | 0/5 | 5/5 | 3/5 | 0/5 | 0/5 |
| | Level 1 Subtotal | 37/50 | 30/50 | 12/50 | 1/50 | 16/60 | 11/60 | 3/60 | 1/60 | 34/60 | 28/60 | 2/60 | 1/60 |
| | Level 2: Composite Operations (Max 20 iterations) | | | | | | | | | | | | |
| 13 | RoPE | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | -- | -- | -- | -- |
| 14 | FFN | 3/5 | 2/5 | 0/5 | 0/5 | 2/5 | 1/5 | 0/5 | 0/5 | 1/5 | 0/5 | 0/5 | 0/5 |
| 15 | SwiGLU | 4/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 |
| 16 | ScaledDotProduct | 3/5 | 2/5 | 0/5 | 0/5 | 1/5 | 0/5 | 0/5 | 0/5 | -- | -- | -- | -- |
| 17 | FlashAttention | 3/5 | 1/5 | 0/5 | 0/5 | -- | -- | -- | -- | -- | -- | -- | -- |
| 18 | MHA | 3/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | -- | -- | -- | -- |
| 19 | GQA | 1/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | -- | -- | -- | -- |
| 20 | MQA | 1/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | -- | -- | -- | -- |
| | Level 2 Subtotal | 18/40 | 5/40 | 0/40 | 0/40 | 3/35 | 1/35 | 0/35 | 0/35 | 1/10 | 0/10 | 0/10 | 0/10 |
| | Level 3: End-to-End System (Max 25 iterations) | | | | | | | | | | | | |
| 21 | ConvBlock | -- | -- | -- | -- | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 1/5 | 0/5 | 0/5 |
| 22 | DecoderBlock (LLaMA) | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | -- | -- | -- | -- |
| 23 | DecoderBlock (T5) | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | 0/5 | -- | -- | -- | -- |
| | Level 3 Subtotal | 0/10 | 0/10 | 0/10 | 0/10 | 0/15 | 0/15 | 0/15 | 0/15 | 0/5 | 1/5 | 0/5 | 0/5 |
| | Total | 55/100 | 35/100 | 12/100 | 1/100 | 19/110 | 12/110 | 3/110 | 1/110 | 35/75 | 29/75 | 2/75 | 1/75 |

-- = Not officially supported by the platform. Each task is evaluated on 5 configurations.
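As a quick arithmetic check on the results table, the sketch below recomputes the overall experiment count and per-model success rates from the "Total" row. The numbers are copied from that row; the names `TOTALS`, `success_rates`, `overall_successes`, and `total_runs` are illustrative helpers introduced here, not part of KernelCraft.

```python
MODELS = ["GPT-5.2", "Gemini-3", "Sonnet 4", "DS-R1"]

# platform -> (successes per model in MODELS order, runs per model),
# copied from the "Total" row of the results table.
TOTALS = {
    "PLENA": ([55, 35, 12, 1], 100),
    "AMD NPU": ([19, 12, 3, 1], 110),
    "Coral NPU": ([35, 29, 2, 1], 75),
}


def success_rates(totals):
    """Per-platform, per-model success rate as a fraction in [0, 1]."""
    return {
        platform: {m: s / runs for m, s in zip(MODELS, successes)}
        for platform, (successes, runs) in totals.items()
    }


def overall_successes(totals):
    """Successful runs per model, summed over all platforms."""
    return {
        m: sum(successes[i] for successes, _ in totals.values())
        for i, m in enumerate(MODELS)
    }


def total_runs(totals):
    """Total number of (model, task, configuration) runs."""
    return sum(runs * len(successes) for successes, runs in totals.values())


if __name__ == "__main__":
    print(total_runs(TOTALS))          # 4 * (100 + 110 + 75) runs in total
    print(overall_successes(TOTALS))
    for platform, rates in success_rates(TOTALS).items():
        print(platform, {m: f"{r:.0%}" for m, r in rates.items()})
```

The run count comes to 1,140, consistent with the "over 1,100 experiments" figure quoted above. Note that the per-platform denominators differ because not every task is supported on every platform.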

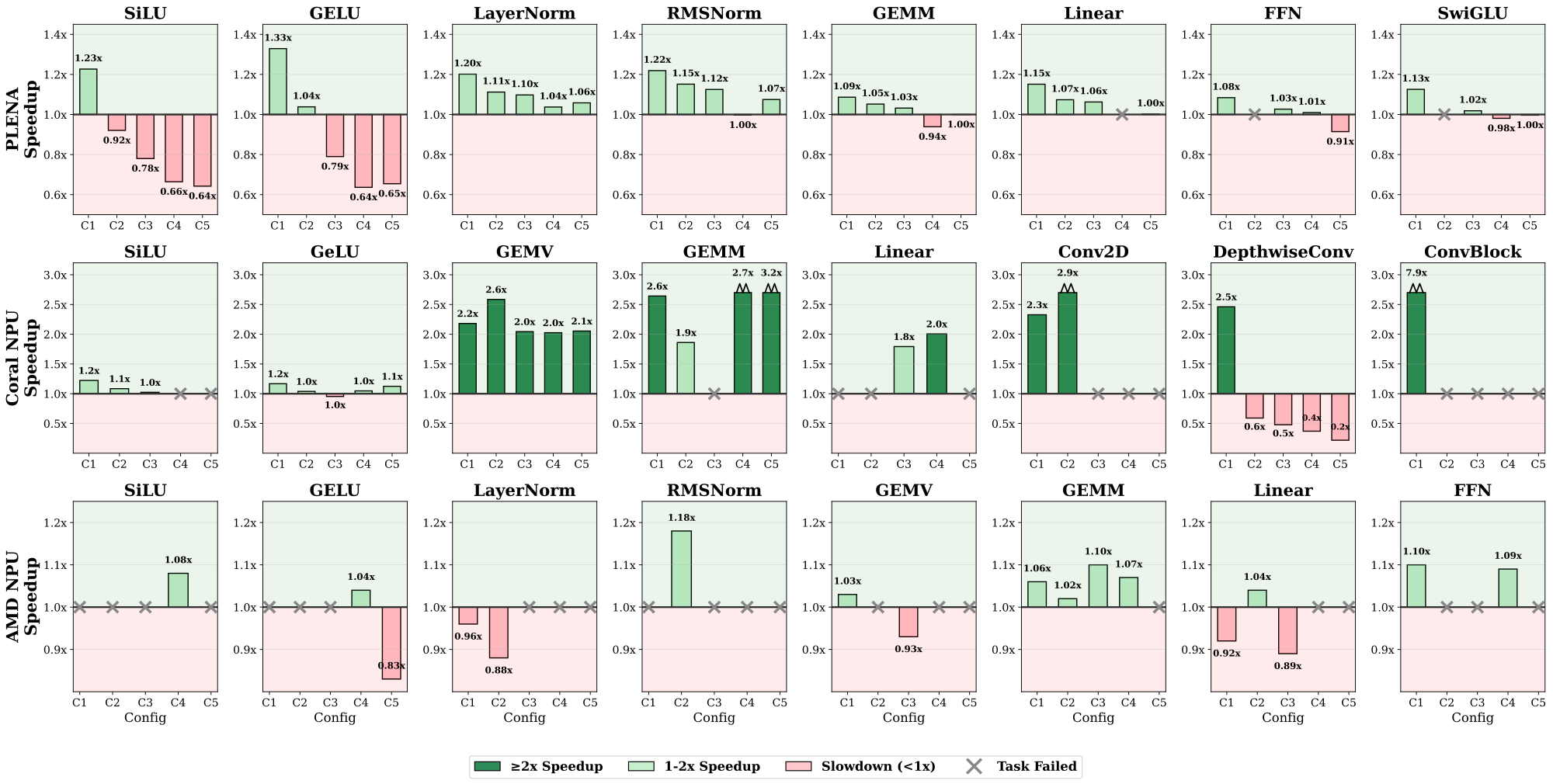

Speedup of the best KernelCraft agent's kernels over compiler baselines on representative workloads across the three accelerator platforms (baselines: PLENA native compiler, Coral RVV -O2, AMD Peano).

Coral NPU exhibits the largest gains (2–8x on GEMV, GEMM, ConvBlock). PLENA normalization tasks achieve consistent 1.06–1.22x speedups. AMD NPU results cluster tightly around baseline (0.89–1.18x).
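For reading these numbers, a speedup above 1 means the agent's kernel is faster than the compiler baseline and a value below 1 means it is slower. The sketch below assumes the conventional definition (baseline cost divided by kernel cost) and assumes cost is measured in cycles; the source does not state the exact metric, and `speedup` and `best_speedup` are hypothetical helper names.

```python
from typing import List, Optional


def speedup(baseline_cycles: int, kernel_cycles: int) -> float:
    """Baseline cost divided by kernel cost (assumed definition)."""
    if baseline_cycles <= 0 or kernel_cycles <= 0:
        raise ValueError("cycle counts must be positive")
    return baseline_cycles / kernel_cycles


def best_speedup(baseline_cycles: int,
                 valid_kernel_cycles: List[int]) -> Optional[float]:
    """Speedup of the fastest functionally valid kernel, or None if none passed."""
    if not valid_kernel_cycles:
        return None
    return speedup(baseline_cycles, min(valid_kernel_cycles))


if __name__ == "__main__":
    # Made-up cycle counts, purely for illustration.
    print(best_speedup(1000, [1250, 800]))  # fastest valid kernel wins
    print(speedup(890, 1000))               # below 1: slower than baseline
```

Only functionally valid kernels should enter `best_speedup`, since a faster kernel that fails correctness validation is not a meaningful comparison point.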